The company is shipping its first-gen chip globally, with over 1000 cores at only 25 watts of power. Can it break into Generative AI?

Suddenly, AI has become the hottest topic du jour for investors and cocktail parties alike. But the estimates for power consumption are pretty outrageous; I’ve seen some projections that Large Language Models like GPT-4 could increase data center power usage five-fold, which is not only a bad idea but simply isn’t possible or affordable, since that much power isn’t even available. And the climate impact would be unacceptable. The University of Massachusetts Amherst found that training a state-of-the-art language model with 1 trillion parameters could require as much as 1.5 GW of power, which is equivalent to the power consumption of a small city.

Into this challenging space come a few startups with a better idea, including Silicon Valley startup Esperanto, which is now shipping its AI/HPC RISC-V platform. While we have covered Tenstorrent, SiFive and Ventana Microsystems, which have impressive RISC-V IP and chiplets, Esperanto is already in-market with a RISC-V chip that packs over 1,000 cores, can span from the cloud to the edge, and consumes only some 25 watts of power or less.

Esperanto Technologies

Esperanto’s first chip, now shipping globally, is built on TSMC 7nm technology and features a unique architecture with two different RISC-V cores. The four out-of-order “Maxion” cores per chip are capable of running an OS like Linux, and they dole out parallel processing kernels to the smaller “Minion” cores, which provide AI and HPC acceleration. Work is underway to make this a “self-hosted” platform, using the Maxion cores to eliminate the x86 host CPU(s), lowering cost and power.

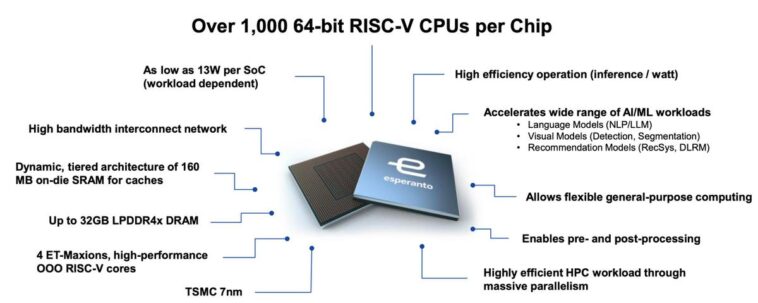

The ET-SoC-1 has over 1000 RISC-V cores and is built on TSMC 7nm fabrication.

Esperanto is currently shipping AI evaluation servers that deliver high performance and high energy efficiency, which in turn lowers TCO. Penguin Computing likes what it sees in Esperanto and is working with the chip designer to create a system in a standard 2U form factor. Each Esperanto server includes dual Xeon host processors and either 8 or 16 ET-SoC-1 PCIe cards. Each Esperanto PCIe card has over 1,000 64-bit RISC-V CPUs with attached vector/tensor units, which adds up to some 16,000 RISC-V CPUs in a 16-card server. Esperanto’s servers support a variety of industry-standard AI models, and customers can also run their own models and data.

Summary statistics of the ET-SoC-1 device.

Esperanto began shipping its first SoC last summer and has recently demonstrated a range of large language models, including Meta’s Open Pre-trained Transformer (OPT), running on a single ET-SoC-1. “Generative AI is one of the latest advancements in machine learning, and we are pleased to contribute elements of our efforts in the area of large language models to the RISC-V research community,” said Art Swift, president and CEO of Esperanto Technologies. The requirements of LLMs and the learnings from current research in three generative AI verticals are key factors driving the definition of the second-generation Esperanto SoC.

Esperanto has shared key findings from three customer trials conducted last year. Customer “A” demonstrated linear scaling from a single chip to a cluster. Customer “B” demonstrated strong performance and power efficiency versus the NVIDIA A100, and Customer “C” saw very strong performance across hundreds of evaluation parameters.

Strategically, Esperanto believes the convergence of HPC and AI creates a large opportunity for the company. HPC customers are always eager to try new processor technology, and the ability to perform well at very low power in both HPC and AI could be attractive to supercomputing institutions looking to adopt RISC-V. Dave Ditzel, company co-founder and the co-inventor of the RISC processor architecture, will be at the ISC event next week in Hamburg discussing the architecture and its applicability to highly parallel applications.

Conclusions

We initially explored the ET-SoC-1 in April 2022, and the promise of an open-source RISC-V multi-core platform is now being tested and proven across AI and HPC application domains. It will still take some time for the necessary software to be ported and fine-tuned, and for initial large-scale deployments to be procured, but the prospects look bright for both RISC-V in general and Esperanto in particular. We would note that some Arm customers are keen to try a technology with more flexible licensing terms and the ability to customize the ISA for specific needs. This is one more reason why so many people are excited about RISC-V.

The RISC-V ride is open for business, and it will dramatically change the IT landscape over the next 5 years.


Disclosures: This article expresses the opinions of the authors, and is not to be taken as advice to purchase from or invest in the companies mentioned. Cambrian AI Research is fortunate to have many, if not most, semiconductor firms as our clients, including Blaize, Cadence Design, Cerebras, D-Matrix, Eliyan, Esperanto, FuriosaAI, Graphcore, GML, IBM, Intel, Mythic, NVIDIA, Qualcomm Technologies, SiFive, SiMa.ai, Synopsys, Tenstorrent, and Ventana Microsystems. We have no investment positions in any of the companies mentioned in this article and do not plan to initiate any in the near future. For more information, please visit our website at https://cambrian-AI.com.